Pausing isn't policy

Bernie Sanders wants a moratorium on data center construction. The political calculus makes sense, but the policy prescription does not.

You're receiving this from Dagger, Asterisk's new home for timelier takes, short essays, and the occasional provocation between our quarterly issues. If you’ve signed up for our Substack, you're already subscribed — we hope you'll stick around.

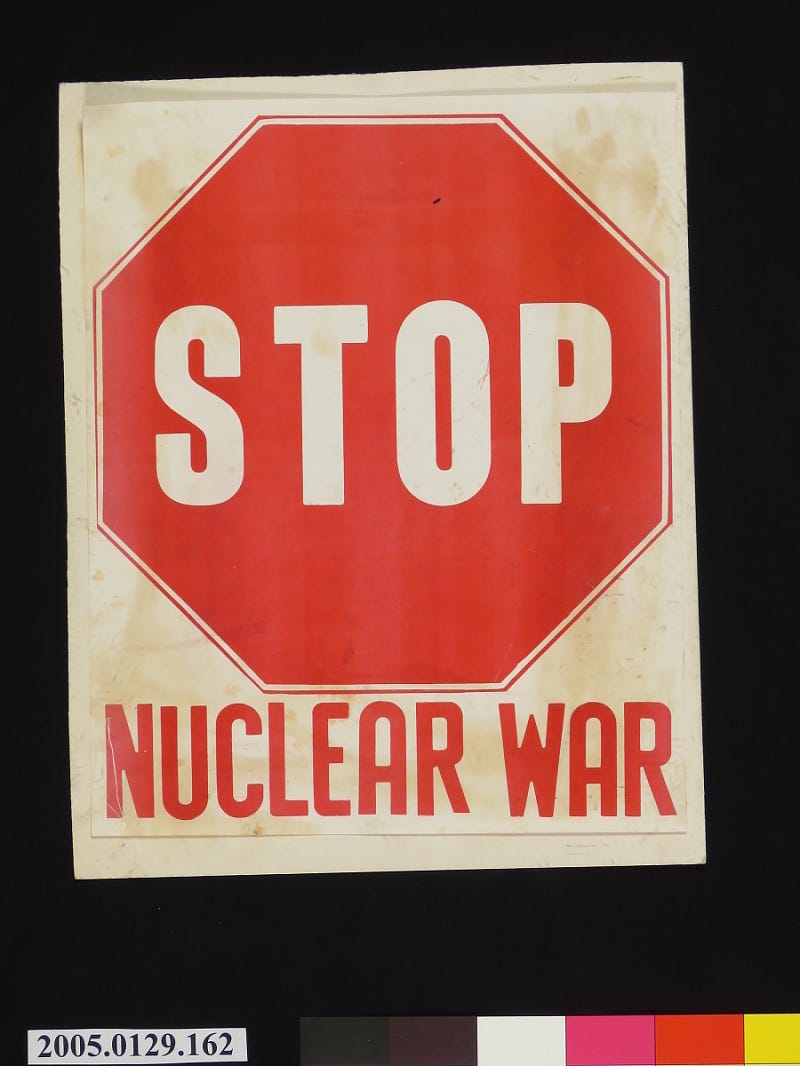

In October 1982, then-Burlington Mayor Bernie Sanders wrote a letter to President Ronald Reagan warning him that nuclear weapons could “destroy all life on the planet” and imploring him to “think courageously and boldly” toward ridding the world of these weapons. Now, in what is likely his last term in public office, Sanders is reprising the existential rhetoric he once brought to nuclear war — this time, for artificial intelligence.

Sanders recently introduced legislation for a federal moratorium on data centers. He's not the first to propose one: data center moratoria have been introduced in at least 12 state legislatures. But he's one of the few to ground such a proposal in AI safety terms, and I don't think that framing is spurious. Sanders's recent work on AI labor displacement and his conversations with AI thinkers such as Geoffrey Hinton and Eliezer Yudkowsky evince a sincere concern for the immediate and long-term risks of AI.

In data centers, Sanders imagines he’s found a vehicle for broader AI governance ambitions. These are visible, literally concrete structures that play on a trifecta of grievances: rising electric bills, anxiety over AI and its corporate creators, and environmental concerns.

However, the bill text is more scattershot than his AI safety messaging suggests. His proposed moratorium would continue until Congress enacts legislation covering pre-release government review of AI products, policies to prevent job displacement, protections against electricity rate hikes, community veto power over data centers, a ban on data center subsidies, and union labor requirements. Whatever one thinks of these items individually, taken together they read less like an AI governance framework and more like a progressive policy grab bag.

I share many of Sanders’s concerns about unchecked AI development, but a pause is not a substitute for actual AI governance. And attempting to address many distinct problems – from safety risks, to labor displacement, to utility costs – through a single, unwieldy solution merely makes it less likely that any of them get addressed. Even taken on its narrowest terms, the moratorium would not achieve its goal.

There’s no plausible path to data center non-proliferation

A data center moratorium is an attempted double-bank shot at AI safety – regulating buildings, in order to constrain compute, in order to pause capabilities. But even if it slowed growth at the margins, it would not be sufficient to constrain AI’s development.

Nuclear nonproliferation has been fairly effective because weapons programs depend on rare materials and other controllable chokepoints. Data centers merely require land, electricity, cooling, fiber, and chips. Some of those inputs can be limited at the margin, especially the most advanced chips. But the basic physical infrastructure of compute is quite fungible.

When Singapore paused new data center development between 2019 and 2022 over energy and land-use concerns, the regional buildout of compute continued regardless. Microsoft, Google, and AWS have collectively committed billions across Malaysia, Thailand, and Indonesia. Meanwhile, the Gulf states are treating AI infrastructure as their post-oil economic strategy. Middle East data center capacity is projected to more than triple within five years, from 1 gigawatt to 3.3 gigawatts. Saudi Arabia just earmarked $40 billion for AI investments and declared 2026 the "Year of Artificial Intelligence."

This is the likely outcome of Sanders's proposal as well: facilities would simply be built in friendlier jurisdictions with weaker environmental standards. The moratorium might slow data center growth somewhat, but it wouldn't stop AI development, and we'd lose the leverage that local ownership confers. If the proposal inspired copycat legislation in blue states and localities, the effect would be the same: data centers would shift to more conservative, loosely regulated communities.

The bill tries to preempt that international shift by imposing export controls on computing infrastructure bound for countries lacking comparable AI regulations, but this raises its own problems. By conditioning chip exports on this set of domestic priorities, many of which have nothing to do with AI safety, we would impose a strange form of American-centric tech policy universalism.

The United States does retain significant leverage over high-end chip supply chains – leverage that, if deployed strategically, could support substantive international AI safety coordination. But that leverage is slowly eroding. In the short run, export controls help us maintain our international lead, and that matters. But as countries like China have demonstrated, sustained export controls can, over the long run, accelerate efforts abroad to improve chip efficiency and achieve supply chain independence. We should not spend our narrow lead pushing a hodgepodge of American labor and environmental priorities on unwilling partners.

An overbroad definition of “AI data center”

Data centers facilitate all kinds of digital activity – Netflix streaming, retail shopping, Instagram scrolling, online banking. AI represented about a quarter of all data center workloads in 2025, though that percentage stands to grow significantly.

Despite branding itself as an "AI data center" moratorium, the bill captures far more. Sanders's definition includes any site used for AI model development at scale, as well as any site that exceeds 20 megawatts of power capacity and delivers 20 kilowatts or more per rack. These features are not unique to frontier AI data centers; they also appear in enterprise and cloud-oriented data centers. The bill doesn't distinguish between these uses, and the average voter won't either.

It’s supply skepticism all the way down

Read Sanders’s statement carefully, and you’ll notice that he doesn’t lead with the AI safety argument. He opens with environmental concerns and rising electricity costs, then he covers job loss, and finally he mentions AI safety risks.

That ordering reveals the real center of gravity here: general opposition to local construction. Sanders cites Denver's moratorium as vindication of his proposal, but Denver's pause was driven by more prosaic land-use and electricity concerns, not AGI anxiety. Presenting it as a win for AI safety retroactively grafts existential stakes onto what is really a normal infrastructure fight. That same reflexive opposition to building has helped produce the housing crisis and obstruct our clean energy buildout, but data centers offer an opportunity to break this pattern.

Data centers aren't the only new loads coming onto the grid. Electric vehicles and electrified manufacturing are also driving demand that requires more generation, more transmission, and long-overdue grid modernization. Many data centers currently rely on gas for near-term power, but they could serve as anchor tenants for new clean generation, fiber, battery storage, and transmission, and a number of companies are already moving in that direction.

Industrial projects like these are also prompting pragmatic shifts on decarbonization from environmental groups. The Natural Resources Defense Council (NRDC), for instance, just supported its first nuclear project ever, to power a data center.

A moratorium forecloses exactly the kind of creative thinking these projects are beginning to generate.

Simply govern the actual technology

Even if a federal moratorium were feasible, the question remains – what do you plan to do with the time you’ve bought?

There are many ways elected officials could approach this. Congress could fund an empowered AI Safety Institute with the authority to conduct risk assessments, safety research, and mandatory evaluations of frontier models, going further than the current US Center for AI Standards and Innovation (CAISI). As AI researchers like Arvind Narayanan and Sayash Kapoor have argued, such institutions must assess risks not only at the model level but also in the context of deployment, which makes state capacity even more essential.

Congress could also impose aggressive chip export controls (independent of this bill's other conditions) or pass whistleblower protections for workers who report specific dangers to public health or national security. And if lawmakers are serious about protecting workers from displacement – not just offering false assurances that it won't happen – they should streamline and expand unemployment insurance and support skilled trades that are labor-intensive and more resilient to AI. All of these initiatives would do more to address the risks Sanders is worried about.

With messaging bills like this one, the second-order effects are the effects, and it is difficult to predict whether they will help or hurt the cause of AI safety. A progressive-led moratorium could cement AI safety as a "left" issue precisely when it needs bipartisan legitimacy, or it could prompt Republicans to shift from ambiently co-signing the Trump administration's accelerationist approach to doing more substantive thinking on AI governance.

It could inspire Democrats to propose their own AI governance and international coordination measures. But whether that’s good or bad depends on whether offices take this bill as a literal blueprint for AI governance, or as a symbolic shot across the bow to the AI labs.

Congress is generally a blinkered institution; lawmakers like Sanders deserve commendation for grappling with more diffuse and long-term risks. But lawmakers must honor that seriousness with remedies that actually address those risks.

Sanders was right in 1982 that the threat of nuclear annihilation demanded a strong response, and he’s right that AI demands one now. But AI demands a distinct approach, one that apprehends the reality of AI risk and the folly of substituting a moratorium for rigorous policymaking. If Congress wants to govern AI, it should. If it wants to protect communities from data center-related harms, it should. But it should not conflate these issues for expediency’s sake.

This article has been updated to reflect new legislation.

Nat Purser is a Senior Policy Advocate at Public Knowledge, where she focuses on artificial intelligence and broadband policy. The views expressed here are her own.

I think there's a case for a messaging bill like this from an AI safety perspective. Essentially, it may be useful as an exercise in coalition building. It introduces people who are worried about environmental or job market impacts to the concept of societal AI risks in a way they might actually listen to, since it also takes their concerns seriously. It seeds the idea that worrying about AI risk is a natural companion to worrying about the environmental or job market impacts of AI.

A lot of politics works this way: you have to stitch together groups coming from different starting points into a semi-coherent coalition. We see this in YIMBYism, for example, which bundles together free marketers, developers, urbanists, young renters in expensive cities, homeowners who want greater property rights (e.g., allowing ADU construction), and even socialists concerned about the cost of living, like Mamdani. Some of these folks detest one another: I doubt libertarians concerned about property rights have much love for Mamdani, and vice versa. YIMBYs have been good at not alienating potential allies, and I think AI risk advocates should do the same.

In this case, hopefully this bill can get AI safety folks and anti-data-center folks together in the same room, and hopefully the next iteration of the bill will include more of the kinds of ideas Nat lays out here.

Thank you for this comprehensive take on what Bernie Sanders is doing. I wasn't aware he's been fighting the uphill battle against AI data centers, but I'm glad he's doing it. To answer your question, "what would you do with the time bought", I would hope Congress and local leaders put into place proper protections - not only to benefit them, but also our environment and people. I love the initiatives you wrote about - I would hope they take action on those!